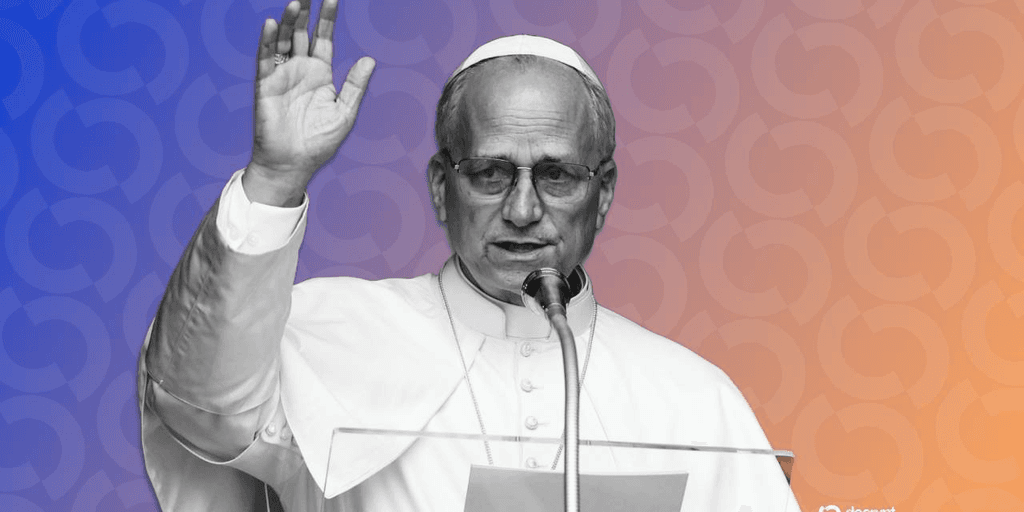

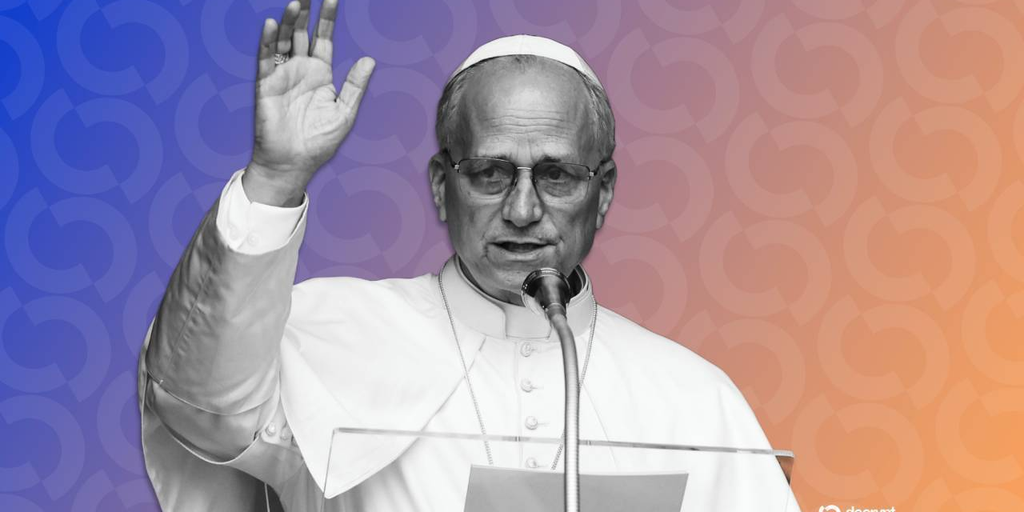

Pope's Anti-AI Warnings May Be AI-Written: A Macroeconomic Perspective

In a curious twist of technological irony, a recent incident involving the Pope's social media account has raised eyebrows and sparked debate about the authenticity of digital content. Pangram Labs, a developer of an AI detection tool, reported that several posts from the Pope's X (formerly Twitter) account were flagged as potentially AI-generated. This revelation comes at a time of widespread concern about artificial intelligence's role in shaping narratives and influencing public opinion.

Quick Take

| Aspect | Details |
|---|---|
| Incident | Posts from Pope's X account flagged as AI-written |
| Detection Tool | Developed by Pangram Labs, aimed at identifying AI content |
| Broader Implications | Raises questions on authenticity and digital trust |
| Potential Impact | Influences public perception and AI regulation discussions |

The Good: The Rise of AI Detection Tools

The development of AI detection tools like Pangram Labs' extension is a step towards ensuring transparency in digital communications. By identifying AI-generated content, these tools help maintain the integrity of information shared on social media platforms. This is crucial in an era where misinformation can spread rapidly, leading to societal consequences.

Moreover, the ability to distinguish human-written from AI-generated content has implications beyond mere fact-checking. It encourages the ethical use of AI technologies and promotes accountability among content creators and platforms. In a world where AI is increasingly adopted for content creation, ensuring the authenticity of voices, especially those of public figures, becomes essential.

The Bad: Implications for Public Figures

However, the situation is complex, particularly when it involves prominent figures like the Pope. If posts intended to convey moral and ethical stances are misidentified as AI-generated, it could undermine the authenticity of those messages. This mislabeling poses a risk of eroding trust in communication from influential leaders.

Furthermore, the potential for misunderstanding extends to other public figures and organizations. If AI detection tools incorrectly flag significant communications, it raises questions about the reliability of such technologies. Public skepticism towards both AI and the institutions that adopt these tools could hamper broader acceptance and effective utilization of AI in beneficial ways.
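To make the reliability question concrete, here is a minimal sketch of how a detector's error rates combine with the share of AI-written posts to determine how much a single flag should be trusted. The function name and every number below are hypothetical, chosen only for illustration; they are not Pangram Labs' published figures, and nothing here describes how its tool works internally.

```python
def prob_ai_given_flag(base_rate: float,
                       true_positive_rate: float,
                       false_positive_rate: float) -> float:
    """Bayes' rule: probability a post is AI-written, given that it was flagged.

    base_rate           -- assumed share of posts that really are AI-written
    true_positive_rate  -- assumed chance an AI-written post gets flagged
    false_positive_rate -- assumed chance a human-written post gets flagged
    """
    flagged_ai = base_rate * true_positive_rate
    flagged_human = (1.0 - base_rate) * false_positive_rate
    total_flagged = flagged_ai + flagged_human
    if total_flagged == 0.0:
        raise ValueError("no post would ever be flagged with these rates")
    return flagged_ai / total_flagged


# Hypothetical scenario: 5% of posts are AI-written, the detector catches
# 95% of them, and wrongly flags 1% of human-written posts.
rare_ai = prob_ai_given_flag(0.05, 0.95, 0.01)

# Same detector, but in a setting where half of all posts are AI-written.
common_ai = prob_ai_given_flag(0.50, 0.95, 0.01)

print(f"AI-written posts are rare:   {rare_ai:.1%} of flags are correct")
print(f"AI-written posts are common: {common_ai:.1%} of flags are correct")
```

Under these assumed numbers, the same detector is noticeably less trustworthy when AI-written posts are rare, which is why a flag on a single high-profile account is better read as a prompt for scrutiny than as proof.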

The Ugly: The Broader Context of AI Regulation

As the debate around AI continues to evolve, this incident underscores a pressing need for comprehensive AI regulation. Governments and regulatory bodies are grappling with the balance between fostering innovation and protecting citizens from potential harms associated with AI misuse. The Pope's anti-AI stance, set against the possibility that his own posts were AI-written or wrongly flagged as such, illustrates the broader societal concerns regarding AI's role in shaping discourse.

The ugly truth is that, while AI has the potential to enhance our lives, it also presents risks that must be managed. As the case of the Pope shows, doubt over whether a message was written by AI, or was wrongly flagged as such, can cloud how that message is received and erode trust in leadership. This incident may prompt a reevaluation of existing regulations and the establishment of guidelines to ensure responsible AI use.

Market Context

The emergence of AI detection tools within the broader macroeconomic landscape reflects a growing recognition of AI's pervasive influence across sectors. Companies and institutions are investing significantly in AI technologies to enhance efficiencies and improve decision-making processes. However, as AI continues to infiltrate various aspects of life, from business to governance, the question remains: how do we manage its integration without sacrificing authenticity?

This scenario illustrates the double-edged nature of technological advancement. While AI can create efficiencies, it can also threaten the credibility of communication. The response from regulatory frameworks and societal attitudes will be pivotal in shaping the future landscape of AI and its applications.

Impact on Investors

For investors, the implications of the Pope's AI-related incident extend into sectors focused on AI development and regulatory compliance. Companies that prioritize ethical AI practices and transparency are likely to gain favor among consumers and investors alike. As awareness of AI's potential pitfalls rises, businesses that can navigate these challenges effectively may see enhanced market positions.

Moreover, investment in AI detection technologies and platforms that focus on ethical AI could present opportunities for growth. As regulatory scrutiny increases, companies capable of demonstrating their commitment to responsible AI use may attract investment more readily, positioning themselves as leaders in the emerging ethical tech landscape.

Looking Ahead

As we move forward, it is crucial for both the public and private sectors to engage in open discussions about AI's role in society. The incident involving the Pope serves as a reminder that while technology can enhance communication, it also requires careful consideration to ensure that the messages conveyed remain genuine and trustworthy. Investors, regulators, and technology developers must work collaboratively to navigate the complexities of AI's integration into our daily lives, ensuring that the digital future remains a reflection of human values and ethics.

In summary, the intersection of AI, authenticity, and regulation is a landscape fraught with challenges but also rich with opportunities. As we scrutinize these developments, understanding their broader implications will be essential for fostering a sustainable and ethical technological future.